AI in Medical Imaging: When the Machine Is Right and the Pathologist Is Not

The integration of artificial intelligence (AI) into medical imaging is no longer a future prospect. In radiology, AI is already routinely used as a decision-support tool, while digital pathology is rapidly moving in the same direction. This evolution raises not only clinical and organizational questions, but also fundamental legal implications, especially when human and machine arrive at different conclusions.

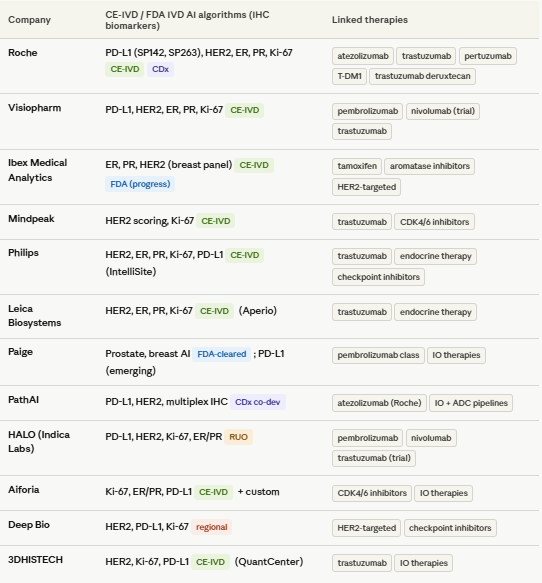

In preparation for this blog article, I reviewed the intended-use claims of the digital pathology companion diagnostic biomarker AI algorithms on the market with CE-IVD or FDA approval for targeted therapy selection. They consistently describe themselves as a ‘diagnostic aid’ and leave the ultimate diagnostic decision with the pathologist. For essentially all CE-IVD and FDA-cleared digital pathology AI algorithms used for IHC (e.g., PD-L1, HER2, ER/PR, Ki-67), the labeling and intended-use statements make it clear that:

The interpreting pathologist retains final responsibility for the diagnosis and scoring.

This is a regulatory requirement and risk-control principle.

On paper, using AI therefore seems to have no impact on the legal responsibility of the diagnosing pathologist, but that responsibility may be perceived differently in litigation.

Radiology as a frontrunner: a legal experiment in real time

Radiology provides a compelling precedent. AI systems are increasingly capable of detecting abnormalities on CT and MRI scans with high sensitivity and are being embedded into clinical workflows. This has created a new reality: not only the diagnosis itself matters, but also how it was reached.

Recent research published in Nature Health demonstrates that, when a medical error occurs, perceptions of liability are strongly influenced by the interaction between physician and AI. In a simulated malpractice case in which a radiologist missed an intracranial haemorrhage that the AI had correctly identified, jurors were significantly more likely to find the physician liable when the images were reviewed only once, after AI input. When the same physician reviewed the images twice (before and after AI feedback), perceived liability decreased substantially.

Notably, the clinical outcome was identical in both scenarios. The only difference was the workflow.

The “AI penalty”: when deviating from the machine becomes risky

These findings align with earlier research showing that physicians are judged more harshly when they deviate from an AI system that ultimately proves to be correct. In such cases, what is sometimes referred to as the “AI penalty” emerges: an increased likelihood of being found liable because a seemingly superior second reader was disregarded.

This has far-reaching implications:

The standard of care shifts implicitly: it becomes increasingly difficult to justify ignoring AI output.

Clinical autonomy comes under pressure from liability-risk avoidance: physicians may act more defensively and follow AI recommendations even when uncertain.

Legal assessments become more context-dependent: not only the error itself, but also the interaction with AI is scrutinized.

From radiology to digital pathology

Although AI is less widely implemented in digital pathology today, the parallels are clear. Both fields rely on pattern recognition, interpretation of complex visual data, and probabilistic outputs. Once AI systems consistently detect abnormalities that pathologists miss, the same legal logic will inevitably apply.

In other words, what is currently observed in radiology is likely a precursor of what pathologists will soon encounter.

A 2022 publication by Wouter Bulten and colleagues already contains the evidence that is likely to drive our use of AI algorithms. The PANDA challenge assessed how well AI algorithms classify prostate cancer in H&E-stained tissue biopsies, using 10,616 digitized prostate biopsies. Importantly, the study included both well-validated and completely new AI algorithms for Gleason grading, and it showed that a diverse set of submitted algorithms reached pathologist-level performance on independent cross-continental cohorts. To compare the algorithms’ performance with that of general pathologists, the authors obtained reviews from two panels of pathologists on subsets of the internal and US external validation sets. For the Dutch part of the internal validation set, 13 pathologists from 8 countries (7 from Europe and 6 from outside Europe) reviewed 70 cases. For the US external validation set, 20 US board-certified pathologists reviewed 237 cases. On average, the algorithms missed 1.0% of cancers, whereas the pathologists missed 1.8%. With this in mind, it is curious that healthcare systems still hesitate over whether AI in pathology can benefit patients.
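To put the two miss rates quoted above side by side, the following is a minimal arithmetic sketch in Python. It uses only the two averages cited in this article (1.0% and 1.8%); the function names and the "per 1,000 cancers" framing are illustrative assumptions, not figures or methods from the PANDA publication, and the result should not be read as a statistical comparison.

```python
# Average miss rates as quoted in this article (percent of cancers missed).
ALGORITHM_MISS_RATE_PCT = 1.0
PATHOLOGIST_MISS_RATE_PCT = 1.8


def missed_per_1000(miss_rate_pct: float) -> float:
    """Convert a miss rate in percent into missed cancers per 1,000 cancers."""
    return miss_rate_pct / 100 * 1000


def relative_difference(reference_pct: float, comparison_pct: float) -> float:
    """Fraction by which the comparison miss rate is lower than the reference."""
    return (reference_pct - comparison_pct) / reference_pct


if __name__ == "__main__":
    algo = missed_per_1000(ALGORITHM_MISS_RATE_PCT)
    path = missed_per_1000(PATHOLOGIST_MISS_RATE_PCT)
    rel = relative_difference(PATHOLOGIST_MISS_RATE_PCT, ALGORITHM_MISS_RATE_PCT)
    print(f"Algorithms: {algo:.0f} missed per 1,000 cancers")
    print(f"Pathologists: {path:.0f} missed per 1,000 cancers")
    print(f"Relative difference: {rel:.0%}")
```

Expressed this way, the quoted averages correspond to roughly 10 versus 18 missed cancers per 1,000, a relative difference of about 44%. Because the averages come from reader panels working on small subsets, this is an illustration of scale rather than an estimate of real-world performance.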

The impact on the individual physician

For physicians who make a diagnostic error, the landscape is changing fundamentally:

Ultimate responsibility remains, but is more demanding

Legally, the physician remains responsible for the final diagnosis. However, in the presence of AI, there is an expectation that its output is actively considered. Ignoring AI may be interpreted as negligence in hindsight.

Documentation and workflow become critical

Not only what decision is made, but how it is reached becomes legally relevant. Whether or not images were reassessed after AI feedback may influence liability.

Increased liability risk in cases of disagreement

When AI is correct and the physician is not, this discrepancy is likely to weigh heavily in court. Studies show that laypeople (such as jurors) tend to place greater trust in what appears to be an objective algorithm.

Defensive medicine takes on a new dimension

Physicians may be inclined to follow AI recommendations more closely or order additional tests to mitigate legal risk, with potential consequences for healthcare costs and patient care.

Toward a new standard of care?

A key question is whether - and when - the use of AI itself will become part of the standard of care. If AI demonstrably outperforms humans in certain detection tasks, failing to use it may eventually be considered as problematic as ignoring it. The example of AI used in Gleason grading illustrates this case perfectly.

This creates a paradox:

Not using AI → potential negligence

Using AI but ignoring it → potential negligence

Following AI blindly → risk of overdiagnosis and new types of errors

Conclusion

AI is not only transforming how diagnoses are made, but also how errors are judged. For physicians, this means that medical liability is becoming less about clinical expertise alone and increasingly about the interaction with technology.

Radiology already demonstrates that the perception of error shifts once AI enters the equation, even when outcomes remain unchanged. In digital pathology, this dynamic is likely to emerge as well.

The implication is clear: in a world where the machine may be right, physicians must not only strive to make the correct diagnosis, but also ensure that their use of AI is defensible from a legal standpoint.

References

Bernstein, M.H., Sheppard, B., Bruno, M.A. et al. The radiologist–AI workflow and the risk of medical malpractice claims. Nat. Health 1, 386–389 (2026). https://doi.org/10.1038/s44360-026-00085-2

Bulten, W., Kartasalo, K., Chen, P.-H.C. et al. Artificial intelligence for diagnosis and Gleason grading of prostate cancer: the PANDA challenge. Nat. Med. 28, 154–163 (2022). https://doi.org/10.1038/s41591-021-01620-2
